Unlimited Possibilities in A-Parser

We have gathered all the benefits on one page; detailed information on each feature can be found in the documentation.

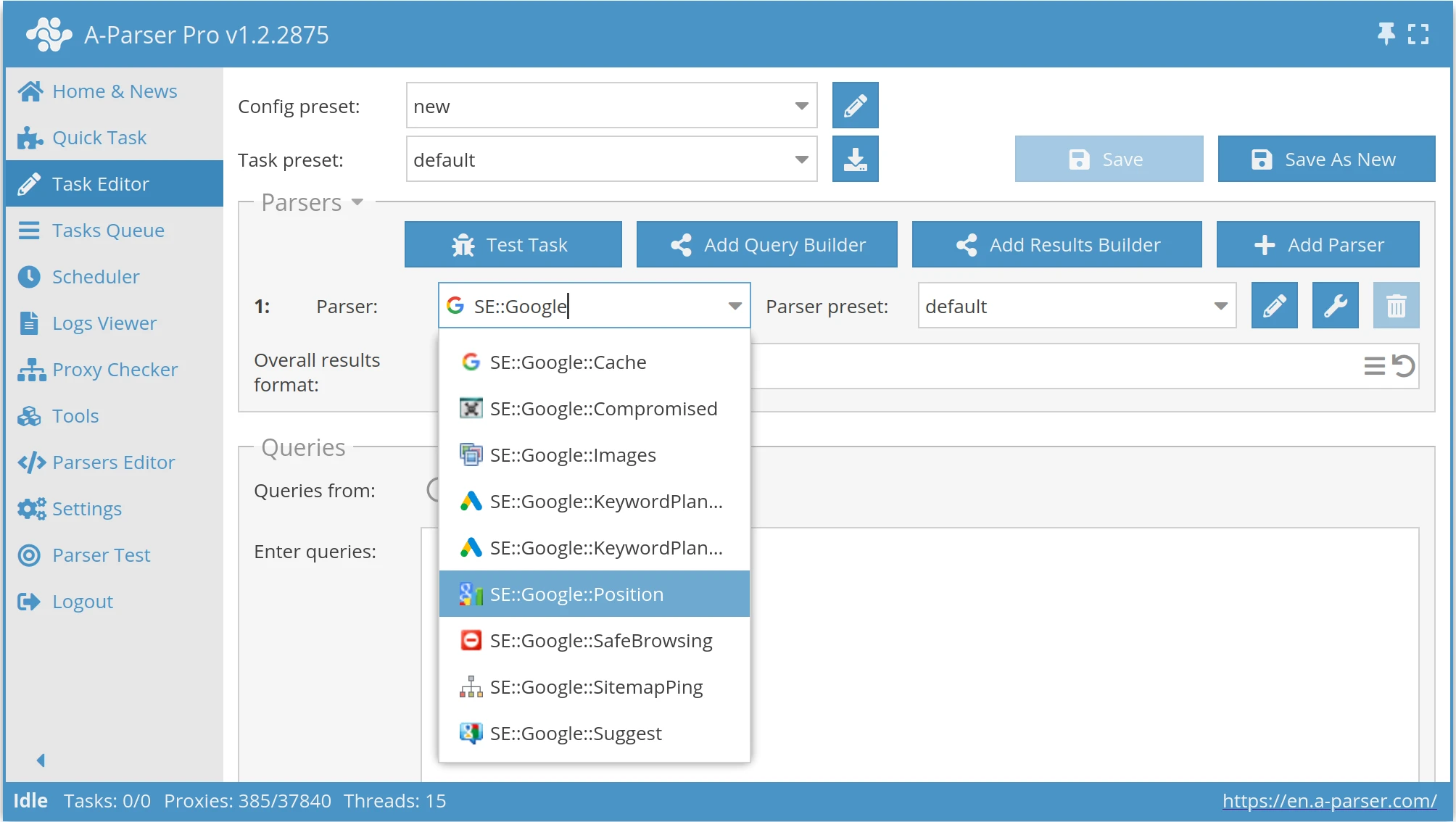

Task Editor

Use up to 20 parsers in a single task, distributing threads evenly to reduce proxy bans and increase parsing speed.

Numerous settings for each parser can be saved into separate presets and reused across various tasks.

Separating input data allows you to change the query format and write additional related data to the results.

Set a separate query format for each parser within one task, and control the order in which formatting is applied.

If your input data may contain repeated queries, A-Parser can deduplicate them so that no redundant work is done.

Automatic query expansion, substitution of subqueries from files, and iteration through alphanumeric combinations and lists.
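As a rough illustration of what iterating through alphanumeric combinations means, the sketch below appends every suffix of a given length to a base query. It is plain Python written for this page, not A-Parser's macro syntax; the function name `expand_query` and its parameters are hypothetical.

```python
from itertools import product
from string import ascii_lowercase, digits


def expand_query(base: str, alphabet: str = ascii_lowercase + digits, max_len: int = 1) -> list[str]:
    """Return `base` followed by every suffix of length 1..max_len drawn from `alphabet`."""
    expanded = []
    for length in range(1, max_len + 1):
        # product() yields every ordered combination of `length` characters.
        for combo in product(alphabet, repeat=length):
            expanded.append(f"{base} {''.join(combo)}")
    return expanded


if __name__ == "__main__":
    for query in expand_query("buy shoes", alphabet="ab", max_len=2):
        print(query)
```

With a 36-character alphabet, one level of expansion turns a single query into 36, and two levels add 1,296 more, so the suffix length is worth keeping small.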

The powerful Template Toolkit lets you apply additional logic to results and output data in various formats, including JSON, SQL, and CSV.

Advanced deduplication capabilities guarantee the uniqueness of the strings, links, and domains you receive.
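The difference between deduplicating by string, by link, and by domain can be sketched as one helper that takes a key function. This is an illustrative Python sketch, not A-Parser's implementation; `unique_by` and `domain_of` are hypothetical names.

```python
from urllib.parse import urlsplit


def unique_by(items, key=lambda item: item):
    """Keep the first item for each distinct key, preserving input order."""
    seen = set()
    kept = []
    for item in items:
        k = key(item)
        if k not in seen:
            seen.add(k)
            kept.append(item)
    return kept


def domain_of(url: str) -> str:
    """Host name of a URL without the port; empty string if there is none."""
    return urlsplit(url).hostname or ""


if __name__ == "__main__":
    links = [
        "https://example.com/a",
        "https://example.com/a",
        "https://example.com/b",
        "https://Example.org/",
    ]
    print(unique_by(links))                 # unique links
    print(unique_by(links, key=domain_of))  # one link per domain
```

Deduplicating by domain keeps only the first link seen for each host, which is usually what you want when building a list of sites rather than a list of pages.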

Save only the data that meets your criteria: substring matches, numerical comparisons, or regular expressions.
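The three filter types named above (substring match, numerical comparison, regular expression) can be sketched as composable predicates. This is a generic Python illustration; the helper names and the `url`/`position` fields are assumptions made for the example, not A-Parser settings.

```python
import re


def contains(field, needle):
    """Predicate: the field's text contains `needle`."""
    return lambda row: needle in str(row[field])


def greater_than(field, limit):
    """Predicate: the field's numeric value is strictly above `limit`."""
    return lambda row: float(row[field]) > limit


def matches(field, pattern):
    """Predicate: the field's text matches the regular expression anywhere."""
    compiled = re.compile(pattern)
    return lambda row: compiled.search(str(row[field])) is not None


def keep(rows, *predicates):
    """Return only the rows that satisfy every predicate."""
    return [row for row in rows if all(p(row) for p in predicates)]


if __name__ == "__main__":
    rows = [
        {"url": "https://example.com/shop", "position": 3},
        {"url": "https://example.org/blog", "position": 12},
        {"url": "https://example.com/about", "position": 15},
    ]
    print(keep(rows, contains("url", "example.com"), greater_than("position", 10)))
```

Combining predicates with a logical AND mirrors the idea of stacking several filters on one result set.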

Use different formats for different files and apply additional conditions and filters, all within a single task to conserve parsing resources.

A detailed log for each thread and each query allows for quick and convenient task debugging.

Extend A-Parser's logic by automatically running different tasks in sequence, passing the results of one task as queries for the next.

Building databases from multiple tasks? Saving deduplication databases ensures you always get only new results.

By running each task with a specified number of threads, you can ensure A-Parser won't exceed your proxy plan or server resources.

Use the debugger to quickly verify a task's operation during its creation, with fast execution and a clear log display.

Task Queue and Scheduler

The task queue frees you from waiting for one task to finish. Add an unlimited number of independent tasks.

Control the number of concurrently running tasks, significantly reducing the total time to get results.

Start, pause, edit, or delete tasks. Resume tasks from where they left off; A-Parser will continue collecting information.

With a large task queue, task priorities let you control which tasks start sooner than others.

Set a global thread limit for all tasks, and A-Parser will automatically distribute threads among active tasks.
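One simple way to picture a global thread limit is a round-robin split in which no task receives more threads than it asked for. The page does not describe A-Parser's actual distribution algorithm, so treat the sketch below as an assumption; `distribute_threads` is a hypothetical name.

```python
def distribute_threads(global_limit: int, requested: dict[str, int]) -> dict[str, int]:
    """Split `global_limit` threads among tasks, never exceeding a task's own request."""
    allocation = {task: 0 for task in requested}
    remaining = global_limit
    # Hand out one thread per task per round until the budget or the demand runs out.
    while remaining > 0:
        progressed = False
        for task, wanted in requested.items():
            if remaining == 0:
                break
            if allocation[task] < wanted:
                allocation[task] += 1
                remaining -= 1
                progressed = True
        if not progressed:
            break  # every task already has all the threads it requested
    return allocation


if __name__ == "__main__":
    print(distribute_threads(10, {"google": 100, "yandex": 100, "whois": 2}))
```

Threads that a small task cannot use flow to the larger ones, so the global budget is spent in full whenever there is enough demand.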

Access a complete history of completed tasks, view statistics, and re-add tasks for another run.

Run recurring tasks using the task scheduler with flexible settings for repetition intervals.

Proxy Checkers and Proxy Management

A-Parser works with all proxy protocols, and the proxy checker can test all types simultaneously.

Add separate proxy checkers for different proxy sources, each with its own verification settings.

Manage the number of checking and downloading threads separately for each proxy checker.

Specify proxy access credentials in the proxy checker settings or in proxy lists with separate authorization data.

A-Parser checks proxies for POST method support, anonymity, response time, and other parameters.

If you're confident your proxies are working, you can disable checking to save resources.

For each task, you can select specific proxy sources, allowing for flexible resource allocation.

For even more flexibility, use different proxies within a single task, such as separate proxies for Google and Yandex scrapers.

If a service bans a proxy, A-Parser will stop using it for a specified time, reducing failed requests.
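The idea of resting a banned proxy for a fixed time can be sketched as a pool that remembers when each ban expires. This is a minimal Python illustration under stated assumptions, not A-Parser's internals; `ProxyPool`, `report_ban`, and the 300-second default are hypothetical.

```python
import time


class ProxyPool:
    """Round-robin proxy pool that skips proxies banned within the last `ban_seconds`."""

    def __init__(self, proxies, ban_seconds=300.0, clock=time.monotonic):
        self._proxies = list(proxies)
        self._ban_seconds = ban_seconds
        self._clock = clock  # injectable so the cooldown can be tested without sleeping
        self._banned_until = {}
        self._next = 0

    def report_ban(self, proxy):
        """Mark `proxy` as unusable until the cooldown has elapsed."""
        self._banned_until[proxy] = self._clock() + self._ban_seconds

    def get(self):
        """Return the next usable proxy, or None if every proxy is cooling down."""
        now = self._clock()
        for _ in range(len(self._proxies)):
            proxy = self._proxies[self._next]
            self._next = (self._next + 1) % len(self._proxies)
            if self._banned_until.get(proxy, float("-inf")) <= now:
                return proxy
        return None


if __name__ == "__main__":
    pool = ProxyPool(["10.0.0.1:8080", "10.0.0.2:8080"], ban_seconds=60)
    pool.report_ban("10.0.0.1:8080")
    print(pool.get())  # the first proxy is skipped while its ban is active
```

Skipping a banned proxy instead of retrying it immediately is what cuts down on failed requests.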

You can limit the maximum number of threads per proxy to avoid overusing its resources.

By default, A-Parser uses a unique proxy for each data download attempt, but this behavior can be changed.

You can exclude certain proxies from general use and assign them only to specific tasks.

Flexible Settings

Save groups of settings into different presets and reuse them in various tasks.

For instance, the Google scraper allows specifying page count, results per page, language settings, geolocation, and much more.

Export your settings and parsers to share with others, or import ready-made tasks from our catalog.

Multithreading and Performance

A-Parser is built on a fully asynchronous architecture, capable of running up to 10,000 concurrent asynchronous threads.

A-Parser employs many optimizations for better performance, and we constantly profile and improve our code.

There are no limits on the number of queries, the size of query files, or the number of results.

Most tasks run smoothly on any standard office or home computer, as well as any entry-level VDS.

Currently, A-Parser can efficiently use up to 4 processor cores. A license with unlimited core support is coming soon.

Captcha Recognition

The most popular CAPTCHA recognition software supports many types of CAPTCHAs, including reCAPTCHA v2.

We support integration with a vast majority of services, including Anti-Captcha, RuCaptcha, CapMonster.cloud, 2captcha, and others.

Captcha recognition support is built into all popular scrapers. You can also use it from your own custom JavaScript scrapers.

Developing Presets Based on Regular Expressions

Apply regular expressions to data obtained from the Net::HTTP scraper or the HTML::LinkExtractor spider.

Collect single data points into variables or repeating blocks (lists, tables) into arrays. Output the data in a convenient format using the templating engine.

You can apply additional processing to the source data of all built-in scrapers (e.g., Google search results).

Use regular expressions to find links to the next page of pagination, and A-Parser will automatically navigate through all pages.
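The two ideas above, collecting repeating blocks into an array and following a next-page link found by a regular expression, can be sketched in a few lines of Python. The HTML markup, the patterns, and the `fetch` callback are all assumptions made for the example; in A-Parser itself this is configured in a preset rather than written as code.

```python
import re
from urllib.parse import urljoin

# Hypothetical markup: each result is <a class="item" href="...">title</a>,
# and the pagination link carries rel="next" before its href attribute.
ITEM_RE = re.compile(r'<a class="item" href="(?P<link>[^"]+)">(?P<title>[^<]+)</a>')
NEXT_RE = re.compile(r'<a[^>]*rel="next"[^>]*href="(?P<href>[^"]+)"')


def parse_page(html, base_url):
    """Return (list of {'link', 'title'} dicts, absolute next-page URL or None)."""
    items = [m.groupdict() for m in ITEM_RE.finditer(html)]
    next_match = NEXT_RE.search(html)
    next_url = urljoin(base_url, next_match.group("href")) if next_match else None
    return items, next_url


def crawl(fetch, start_url, max_pages=10):
    """Follow next-page links and collect items; `fetch` is a caller-supplied url -> html function."""
    results, url, seen = [], start_url, set()
    while url and url not in seen and len(seen) < max_pages:
        seen.add(url)
        items, url = parse_page(fetch(url), url)
        results.extend(items)
    return results


if __name__ == "__main__":
    pages = {
        "https://example.com/list": '<a class="item" href="/a">A</a><a rel="next" href="/list?page=2">next</a>',
        "https://example.com/list?page=2": '<a class="item" href="/b">B</a>',
    }
    print(crawl(pages.__getitem__, "https://example.com/list"))
```

The `seen` set and `max_pages` cap guard against pagination loops, which are easy to hit when a site links its last page back to itself.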

Use regular expressions to validate content, check for proxy bans, or detect captchas. A-Parser will automatically retry with another proxy upon failure.

With the result constructor, you can perform search-and-replace operations using regular expressions on any scraping results.

Developing Scrapers in JavaScript

Write linear, synchronous-looking code with async/await; A-Parser executes it in a multi-threaded environment.

A-Parser lets you focus on writing data extraction and transformation code, handling all proxy management and retries automatically.

Write in modern JavaScript (ES2020+) or use TypeScript for strong typing and syntax highlighting.

The vast npm module catalog lets you extend A-Parser's data extraction and processing capabilities with third-party packages.

A-Parser adds proxy support to the popular Puppeteer library, allowing automatic use of different proxies for different tabs.

You can send requests to any built-in or other JavaScript scrapers, enabling the creation of arbitrarily complex logic.

Automation and API

Send HTTP requests from your own programs and scripts, or use our ready-made libraries for Node.js, Python, PHP, and Perl.

Add tasks by preset name or by providing a full JSON structure with detailed settings.

Gain full control over the task queue, track task statuses, and download results.

Send an HTTP request and receive the results immediately upon completion of the data collection.
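A request of this kind might look like the following Python sketch, which uses only the standard library. The endpoint URL, the `password`/`action`/`data` envelope, the `oneRequest` action name, and the fields inside `data` are assumptions for illustration; check the API documentation for the exact names before relying on them.

```python
import json
from urllib.request import Request, urlopen


def build_api_request(endpoint, password, action, data):
    """Build a JSON POST request; the envelope field names are assumed, not confirmed."""
    body = json.dumps({"password": password, "action": action, "data": data}).encode("utf-8")
    return Request(endpoint, data=body, headers={"Content-Type": "application/json"}, method="POST")


def call_api(request, timeout=60.0):
    """Send the request and decode the JSON response."""
    with urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))


if __name__ == "__main__":
    request = build_api_request(
        "http://127.0.0.1:9091/API",  # assumed local endpoint
        password="secret",            # placeholder credential
        action="oneRequest",          # assumed action name for a single blocking query
        data={"query": "test", "parser": "SE::Google", "preset": "default"},
    )
    print(request.get_method(), request.full_url)
    # result = call_api(request)  # uncomment when an A-Parser instance is reachable
```

Keeping request construction separate from sending makes the payload easy to inspect and test without a running instance.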

For high-load projects, connect an unlimited number of A-Parser instances to process API requests from a Redis queue with minimal latency.

For complete automation, a remote update of A-Parser is available through an API call.

Continuous Improvements and Support

The constant evolution of A-Parser provides our users with new capabilities year after year.

We test all built-in scrapers daily and automatically, allowing us to release updates promptly in response to any layout or result changes.

Free technical support is available to all our users, who rate it the best among similar products.

We regularly release educational materials, sample presets and scrapers, as well as tutorial videos on our YouTube channel.

Most new features and scrapers are developed based on requests from our users.

We can save you time by offering custom scraper development on our platform, as well as integration with your business logic and databases.

Choose the Right License

Lifetime license; updates are purchased separately

A-Parser Lite

Basic Google and Yandex scrapers

- Includes Google & Yandex scrapers

- 3 months of updates

- Bonus proxies: 20 threads for 2 weeks

- Support

A-Parser Pro

Access All Scrapers

- Full suite of 110+ scrapers

- Create your own JavaScript scrapers

- 6 months of updates

- Bonus proxies: 50 threads for a month

- Includes all features from the Lite plan

A-Parser Enterprise

Access All Scrapers and API

- API control

- Multi-core task processing

- Redis integration

- Includes all features from the Pro plan

Updates: $49 for 3 months, $149 for a year, or $399 lifetime

Paid Solutions

Custom Scraper Development

We believe any data can be scraped.